Edge Learning Lab

Edge Learning Lab is a unique environment that enables professionals and students from our partners to explore the possibilities and limitations of decentralized and edge learning.

Edge learning is recognized as one of the most promising areas of innovation in AI, breaking down centralized storage and compute structures into distributed solutions. AI Sweden’s Edge Learning Lab draws together technology developers with industry partners focused on solving real-world problems and global researchers who share an interest in sparking innovations in domains such as telecom, finance, mobility, health, manufacturing, agriculture, and retail. The lab is a unique testbed with state-of-the-art hardware and software, designed to enable developers, data scientists, students, researchers, and other users to explore and learn about edge learning and pioneer new research questions.

If you are interested in becoming a partner of AI Sweden and gaining access to the partner benefits, including the Labs, please feel free to reach out.

What is edge learning?

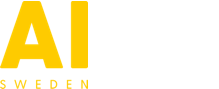

Decentralized AI and edge learning refer to moving intelligence and learning out to different devices and organizations. We can train machine learning models on locally available (i.e. decentralized) data and make local decisions. The approach enables combining knowledge from several local datasets without distributing the actual raw data between devices, locations, and organizations. Unlike centralized training, which gathers all data in one place, decentralized learning distributes models rather than the data itself.

In federated learning, local models trained on edge devices are typically aggregated at a central location.

1. Create initial model

2. Transmit current model to devices

3. Training on devices using local data

4. Transmit local model parameters to aggregator

5. Aggregate parameters to create updated model

6. Send latest model for further training or deployment
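
The federated learning steps above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the lab's implementation: the model is a toy linear fit `y ≈ w*x + b`, the aggregation rule is a sample-size-weighted average of parameters (the common "federated averaging" approach), and all names (`local_train`, `aggregate`, `federated_rounds`, `make_device_data`) are hypothetical.

```python
import random

def local_train(weights, data, lr=0.1, epochs=5):
    """Step 3: a device refines the received model on its own local data."""
    w, b = weights
    n = len(data)
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, y in data:
            err = (w * x + b) - y
            grad_w += err * x
            grad_b += err
        w -= lr * grad_w / n
        b -= lr * grad_b / n
    return (w, b)

def aggregate(local_weights, sizes):
    """Step 5: average parameters, weighted by each device's sample count."""
    total = sum(sizes)
    w = sum(lw[0] * n for lw, n in zip(local_weights, sizes)) / total
    b = sum(lw[1] * n for lw, n in zip(local_weights, sizes)) / total
    return (w, b)

def federated_rounds(device_data, rounds=200):
    global_model = (0.0, 0.0)                      # step 1: initial model
    sizes = [len(d) for d in device_data]
    for _ in range(rounds):
        # Steps 2-4: send the model out, train locally, collect parameters.
        # Only parameters come back; the raw data stays on each device.
        local = [local_train(global_model, d) for d in device_data]
        global_model = aggregate(local, sizes)     # step 5
    return global_model                            # step 6: latest model

def make_device_data(seed, n):
    """Synthetic local dataset drawn from y = 2x + 1 (illustrative only)."""
    rng = random.Random(seed)
    return [(x, 2.0 * x + 1.0) for x in (rng.uniform(-1, 1) for _ in range(n))]

devices = [make_device_data(seed, 20) for seed in range(3)]
w, b = federated_rounds(devices)
print(f"w={w:.3f}, b={b:.3f}")
```

In this sketch the aggregator never sees a single `(x, y)` pair, only each device's `(w, b)` and its sample count.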

In swarm learning, one of the edge devices is also used as an aggregator.

1. Devices register on network

2. Devices receive initial model

3. Devices train model on local data

4. Devices share and merge models

5. Repeat steps 3-4 until satisfied
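
The swarm learning loop above can be sketched in a similar way. The assumptions here are illustrative, not taken from any specific swarm learning framework: each peer's "model" is a single number estimating the mean of its data, the peer that merges in each round is picked at random, and merging is a sample-size-weighted average. The names `Peer` and `swarm_learning` are hypothetical.

```python
import random
from dataclasses import dataclass

@dataclass
class Peer:
    name: str
    data: list          # local samples; they never leave this peer
    model: float = 0.0  # one parameter: the peer's estimate of the mean

    def train(self, lr=0.2, steps=3):
        """Step 3: gradient steps on squared error against local data."""
        local_mean = sum(self.data) / len(self.data)
        for _ in range(steps):
            self.model -= lr * 2.0 * (self.model - local_mean)

    def merge(self, peers):
        """Step 4: this peer acts as aggregator for the current round."""
        total = sum(len(p.data) for p in peers)
        return sum(p.model * len(p.data) for p in peers) / total

def swarm_learning(peers, rounds=30, seed=0):
    rng = random.Random(seed)
    network = list(peers)                 # step 1: devices register
    for p in network:
        p.model = 0.0                     # step 2: receive initial model
    for _ in range(rounds):               # step 5: repeat steps 3-4
        for p in network:
            p.train()                     # step 3: local training
        leader = rng.choice(network)      # no fixed central server
        merged = leader.merge(network)    # step 4: share and merge
        for p in network:
            p.model = merged
    return network[0].model

peers = [Peer("a", [1.0, 2.0, 3.0]), Peer("b", [10.0]), Peer("c", [4.0, 6.0])]
print(swarm_learning(peers))
```

With these toy settings the shared model settles on the mean of all samples across peers, even though no peer ever reads another peer's `data`.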

What are the benefits of edge learning?

Traditional centralized AI applications have evolved over the past few decades to

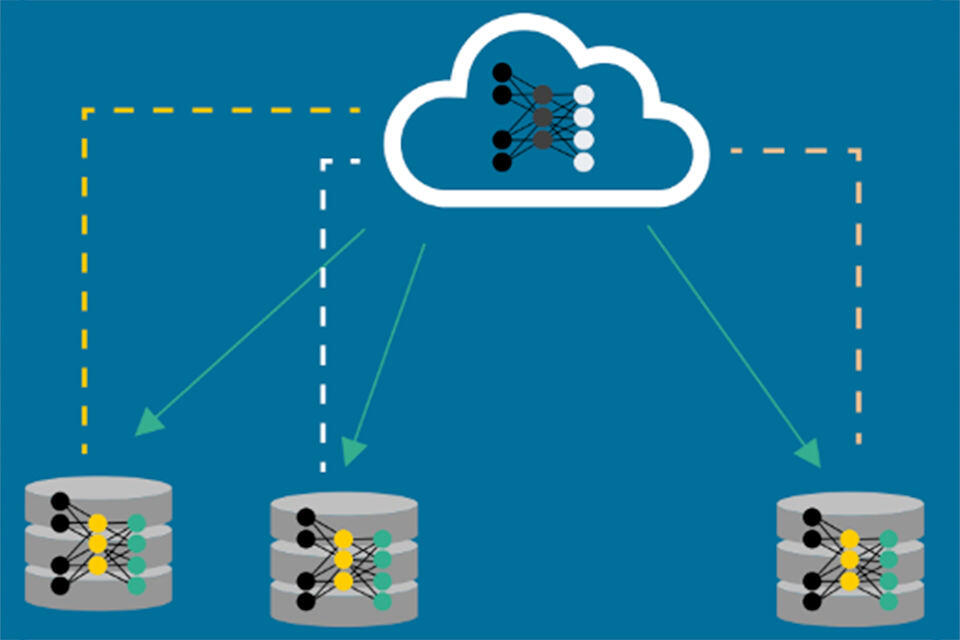

reshape how we live and move. We are familiar with voice assistants (e.g., Siri, Alexa, and Google Assistant). Active driver assistance and automated driving features are increasing in prevalence to heighten safety and reduce the load on drivers. The same trend is visible in healthcare, where assisted diagnostics are used to assist physicians in identifying and correctly diagnosing medical conditions.

Topics such as AI ethics, data privacy, data security, data ownership, data transfer, and computing and storage costs are concerns for businesses, the public, and governments. Countries have begun to create policies that favor local over global learning (e.g., Chinese data protections) or otherwise limit the long-term shared open use of data. At the same time, stakeholders are increasingly worried about AI bias and argue strongly for more diversified and less restrictive modeling.

By moving learning to the edge, organizations can begin to collaborate globally while addressing concerns around data security, data privacy, and data transfer barriers. At the same time, they can put otherwise unused computational resources to work and address the growing shortcomings of data-starved modeling.

Edge learning lab, AI Sweden office in Gothenburg

Projects

DataRätt InnoVation (DRIV) 2021-2023

Federated Fleet Learning

Federated Learning In Banking

Regulatory Pilot Testbed

SpaceEdge

SpaceEdge 2

Contact

Team